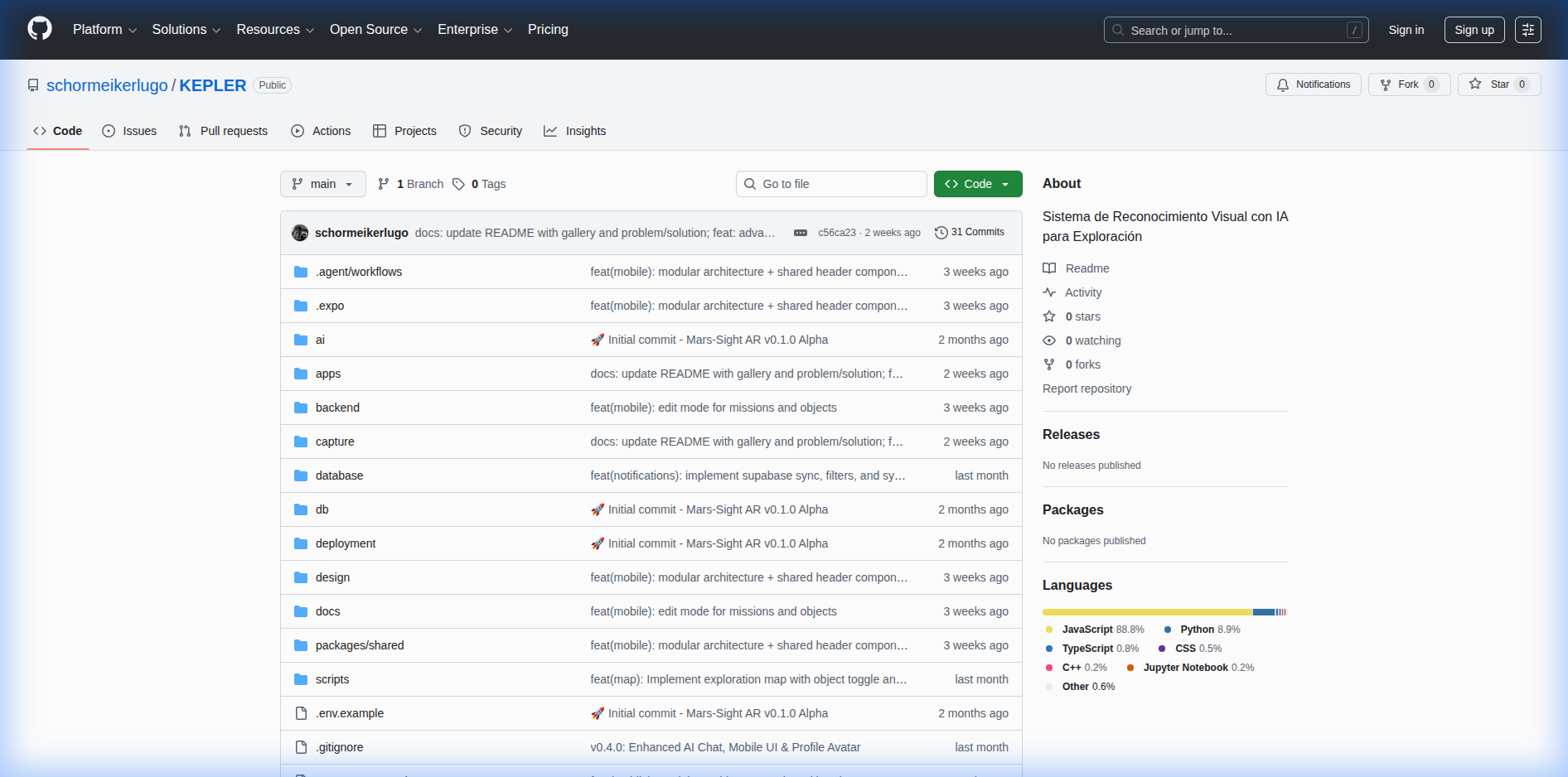

KEPLER

KEPLER is a working, AI-powered platform designed for field exploration. Inspired by telemetry systems and space HUDs, it serves as a tool for resource discovery and geological analysis. The ecosystem comprises an ultra-lightweight Desktop Control Center connected to a Python backend for computer vision (YOLOv11); a native Mobile Field Unit (React Native) for real-terrain exploration; tactical 3D satellite map widgets; conversational chat with local LLMs (Llama 3/Mistral) for scientific assistance; and a distributed database layer built on Supabase, designed to keep working without internet connectivity.
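The description says the database layer keeps working offline but does not say how. A common way to get that behaviour is a local outbox: every finding is written to on-device storage first and pushed upstream later. The Python sketch that follows illustrates that pattern under stated assumptions. It uses the standard-library `sqlite3` module as the local store, and `OfflineOutbox`, `record`, `flush`, and the `push_fn` callback are hypothetical names standing in for whatever client actually talks to Supabase. It is not KEPLER's implementation.

```python
"""Hypothetical offline-first outbox sketch (not KEPLER's actual code).

Findings are always persisted locally first; a caller-supplied `push_fn`
(an assumed stand-in for a real Supabase client call) uploads them later.
"""
import json
import sqlite3
from typing import Callable


class OfflineOutbox:
    def __init__(self, db_path: str = ":memory:") -> None:
        # Local SQLite file (or in-memory DB) that survives loss of connectivity.
        self.conn = sqlite3.connect(db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " payload TEXT NOT NULL,"
            " synced INTEGER NOT NULL DEFAULT 0)"
        )
        self.conn.commit()

    def record(self, finding: dict) -> int:
        """Store a finding locally; this step never touches the network."""
        cur = self.conn.execute(
            "INSERT INTO outbox (payload) VALUES (?)", (json.dumps(finding),)
        )
        self.conn.commit()
        return cur.lastrowid

    def pending(self) -> int:
        """Number of findings not yet confirmed by the remote store."""
        (count,) = self.conn.execute(
            "SELECT COUNT(*) FROM outbox WHERE synced = 0"
        ).fetchone()
        return count

    def flush(self, push_fn: Callable[[dict], bool]) -> int:
        """Push unsynced findings in insertion order.

        `push_fn` returns True on success. The first failure stops the
        flush, so the remaining rows stay queued for the next attempt.
        Returns how many rows were marked as synced.
        """
        rows = self.conn.execute(
            "SELECT id, payload FROM outbox WHERE synced = 0 ORDER BY id"
        ).fetchall()
        sent = 0
        for row_id, payload in rows:
            if not push_fn(json.loads(payload)):
                break  # still offline (or server error): keep the rest queued
            self.conn.execute("UPDATE outbox SET synced = 1 WHERE id = ?", (row_id,))
            self.conn.commit()
            sent += 1
        return sent
```

In use, `record` would be called whenever a finding is confirmed, and `flush` retried whenever the device regains a connection. Because a failed push stops the loop, findings are delivered in the order they were recorded and an unsent row is never marked as synced.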

Development — AI

> kepler@mission-control:~$ diagnostics --run
>
> ERROR: Exploration of unknown environments faces a gap between human perception and the analytical intelligence available in the field.
>
> Raw data without context: the human eye observes but cannot identify or classify in real time.
>
> WARN: Intermittent or no connectivity in remote areas. Sending data to the cloud means waiting hours or getting no response at all.
>
> Fragmented tools: GPS in one app, camera in another, notes on paper.
>
> CRITICAL: Without structured, immediate recording, findings are lost and never correlated with previous observations.
>
> 21st-century technology with 20th-century workflows.

KEPLER bridges the gap between human perception and field intelligence with a platform that runs YOLOv11 models directly on the explorer's device, identifying and classifying minerals in milliseconds without relying on internet connectivity. Its Cortex AI module, powered by Mistral 7B running locally, goes beyond detection: it analyzes each finding in the context of the complete exploration history, letting the user query relationships between samples from different missions in natural language. Every discovery is automatically geolocated on tactical 3D maps that combine GPS coordinates, satellite imagery, and AI-generated terrain descriptions, revealing distribution patterns that the human eye cannot detect on its own.

The entire ecosystem operates in two complementary modes: the Desktop Control Center for mission planning, and the Mobile Field Unit, with augmented reality, for work on the terrain. It synchronizes in real time when connectivity exists and runs fully offline when it does not, so no finding is ever lost regardless of environmental conditions.
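Relating samples across missions and spotting distribution patterns on a map both start from the distance between geotagged findings. As an illustration only, the following Python sketch uses the haversine formula (a spherical-Earth approximation) to list earlier samples of the same mineral class, recorded during other missions, within a given radius of a new finding. The `Finding` record, its fields, `related_findings`, and the 500 m default radius are hypothetical; the source does not describe KEPLER's data model or how Cortex selects related samples.

```python
"""Hypothetical sketch: correlate geotagged findings across missions by
great-circle distance. `Finding` and `related_findings` are illustrative
names, not part of KEPLER.
"""
import math
from dataclasses import dataclass
from typing import List, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres (spherical model)


@dataclass(frozen=True)
class Finding:
    mission: str       # mission identifier (assumed field)
    label: str         # class predicted by the detector, e.g. "quartz"
    confidence: float  # detector score in [0, 1]
    lat: float         # latitude in degrees
    lon: float         # longitude in degrees


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def related_findings(
    target: Finding, history: List[Finding], radius_m: float = 500.0
) -> List[Tuple[float, Finding]]:
    """Same-label findings from *other* missions within radius_m, nearest first."""
    hits = []
    for f in history:
        if f.mission == target.mission or f.label != target.label:
            continue  # only cross-mission matches of the same mineral class
        d = haversine_m(target.lat, target.lon, f.lat, f.lon)
        if d <= radius_m:
            hits.append((d, f))
    hits.sort(key=lambda pair: pair[0])  # sort by distance only
    return hits
```

A short, distance-ranked list like this is the kind of compact context that could be handed to a local LLM when the user asks how a new sample relates to earlier ones; whether Cortex works this way is an assumption.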